Please Note: This blog was originally published as Integrating Perspectives for a Quality Evaluation Design at www.evalu-ate.org on August 2, 2017.

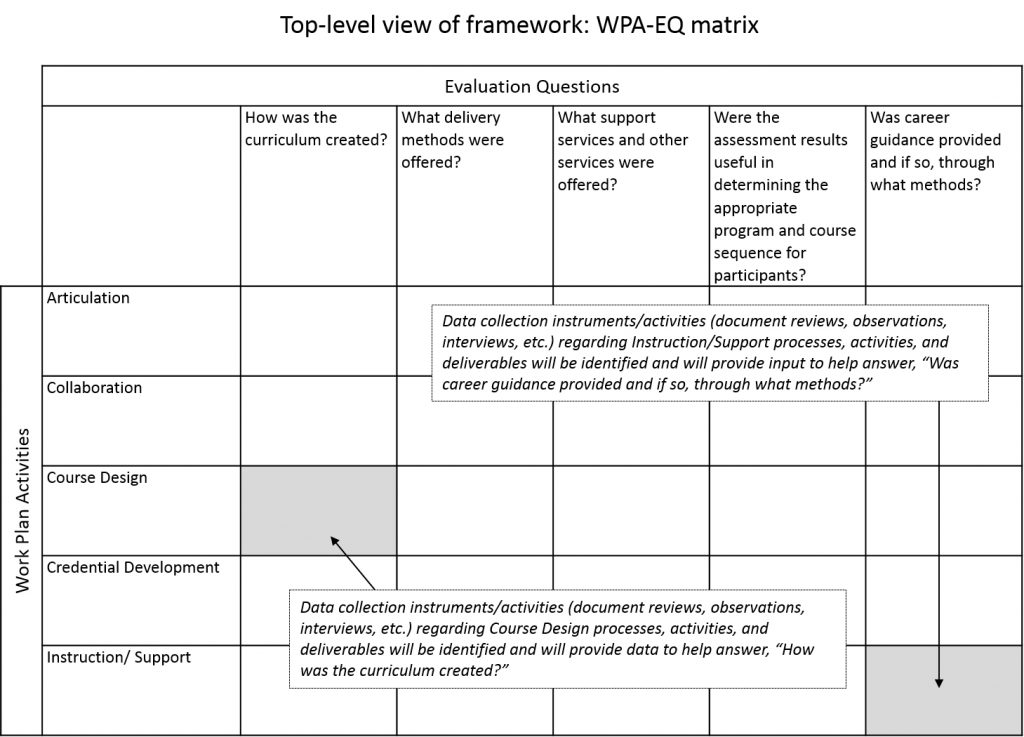

Designing a rigorous and informative evaluation depends on communication with program staff to understand planned activities and how those activities relate to the program sponsor’s objectives and the evaluation questions that reflect those objectives (see white paper related to communication). At NC State Industry Expansion Solutions, we have worked on evaluation projects long enough to know that such communication is not always easy, because program staff and the program sponsor often look at the program from two different perspectives: program staff focus on work plan activities (WPAs), while the program sponsor may be more focused on the evaluation questions (EQs). To facilitate communication at the start of an evaluation project, and to support design and implementation, we developed a simple matrix technique that links the WPAs to the EQs (see below).

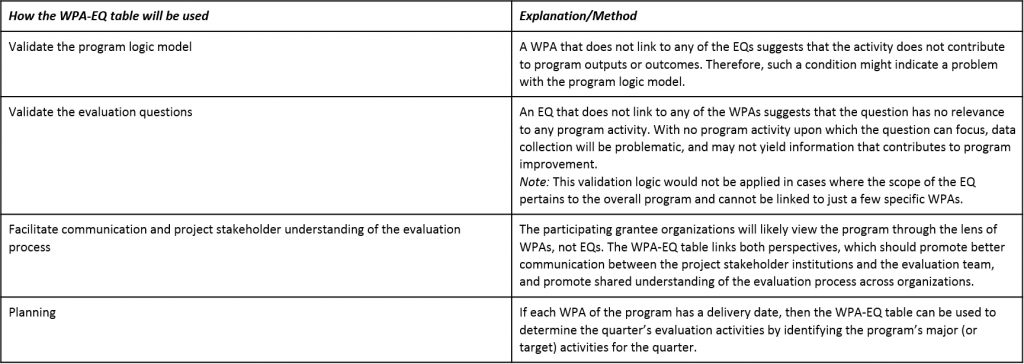

For each of the WPAs, we link one or more EQs and indicate what types of data collection events will take place during the evaluation. During project planning and management, the crosswalk of WPAs and EQs will be used to plan out qualitative and quantitative data collection events.
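For readers who keep their planning documents in code or spreadsheets, the crosswalk can be sketched in a few lines of Python. This is only an illustration of the idea, not the tool we use: the activity names, EQ labels, and data collection events are hypothetical placeholders, and the helper functions are our own invention for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Activity:
    """One work plan activity (WPA) and the items it is linked to."""
    name: str
    eqs: set                                         # evaluation questions this WPA informs
    data_events: list = field(default_factory=list)  # planned data collection events


def crosswalk_rows(activities, all_eqs):
    """Build one matrix row per WPA: name, an 'X' per linked EQ, then data events."""
    rows = []
    for activity in activities:
        marks = ["X" if eq in activity.eqs else "" for eq in all_eqs]
        rows.append([activity.name, *marks, "; ".join(activity.data_events)])
    return rows


def uncovered_questions(activities, all_eqs):
    """Return the EQs that no WPA is linked to (a gap in the design)."""
    covered = set().union(*(activity.eqs for activity in activities))
    return sorted(set(all_eqs) - covered)


# Hypothetical example data, for illustration only.
EQS = ["EQ1", "EQ2", "EQ3"]
ACTIVITIES = [
    Activity("WPA1: Recruit participants", {"EQ1"}, ["enrollment records"]),
    Activity("WPA2: Deliver training", {"EQ1", "EQ2"},
             ["participant survey", "staff interviews"]),
]

if __name__ == "__main__":
    print(["Activity", *EQS, "Data collection"])
    for row in crosswalk_rows(ACTIVITIES, EQS):
        print(row)
    print("EQs with no linked activity:", uncovered_questions(ACTIVITIES, EQS))
```

In this made-up example, no activity is linked to EQ3, which is the kind of gap the matrix is meant to surface early, while there is still time to discuss it with program staff and the sponsor.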

The above framework may be more helpful with the formative assessment (process questions and activities). However, it can also enrich the knowledge gained by the participant outcomes analysis in the summative evaluation in the following ways:

- Understanding how the program has been implemented will help determine fidelity to the program as planned, which will help determine the degree to which participant outcomes can be attributed to the program design.
- Details on program implementation that are gathered during the formative assessment, when combined with evaluation of participant outcomes, can suggest hypotheses regarding factors that would lead to program success (positive participant outcomes) if the program is continued or replicated.
- Details regarding the data collection process that are gathered during the formative assessment will help assess the quality and limitations of the participant outcome data, and the reliability of any conclusions based on that data.

So, for us, this matrix approach is a quality check on our evaluation design that also helps during implementation. Maybe you will find it helpful, too.

—

Dominick Stephenson is the Assistant Director, Research Development and Evaluation for Industry Expansion Solutions’ Evaluation Solutions Group. Dominick is responsible for managing evaluation functions throughout the project, including client engagement, evaluation design, data collection, instrument design, and report writing. Dominick is a graduate of East Carolina University with an M.A.Ed. in Adult Education and a B.S.B.A. in Management Information Systems.

John Dorris is the Director of Research Development and Evaluation at NC State Industry Expansion Solutions and the IES Evaluation Solutions Group. He provides leadership on strategy, research, and data analysis efforts related to workforce development, organizational learning, STEM, and emerging trends in evaluation. Dr. Dorris holds a B.S. degree in Business Administration from the University of Tennessee-Knoxville, two M.S. degrees (Business Administration and Statistics) from Penn State University, and an Ed.D. in Learning and Leadership from the University of Tennessee-Chattanooga.